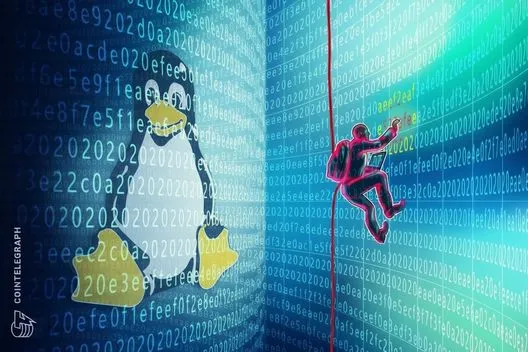

X has announced new commitments to curb illegal hate and terror content on its platform in the UK. The move follows increased scrutiny from Ofcom, the UK’s online safety regulator, which has identified persistent problems with how such material is handled.

Under an agreement announced today, X will block access to accounts flagged for posting illegal terrorist content, specifically accounts linked to UK-based terror groups. The platform has also committed to reviewing at least 85% of reported hate and terror content within 48 hours, a target that will put its content moderation processes under pressure.

X also plans to work with experts to improve its systems for reporting illegal content, and will give Ofcom quarterly performance data over the next year so the regulator can monitor compliance. "These commitments represent a step forward, but progress must continue," said Oliver Griffiths, Ofcom’s online safety director. He added that the evidence suggests terrorist content and hateful speech remain prevalent on several major social media platforms.

Ofcom’s scrutiny of X is part of a broader compliance review, launched in December, into whether social media platforms have adequate measures in place to tackle illegal hate and terror content. Griffiths also noted that a separate investigation into a controversial AI chatbot feature on X remains ongoing.

Although the commitments give Ofcom a basis for sanctioning X if it fails to comply in the future, the regulator has not held the platform accountable for any past breaches of the UK’s online safety rules. The commitments are also vague about proactive measures for detecting illegal content, leaving it unclear whether content will be assessed by automated tools or by human moderators. With concerns mounting over the adequacy of X’s safety measures, the shrinking size of the platform’s moderation team has also come under scrutiny.

The agreement reflects a continuing push in the UK for stricter regulation of online content and for social media platforms to take responsibility for keeping their users safe.

Source: The Verge